The 75th Block: Control-F(acebook)

If you were not affected by whatever the heck happened at Facebook, you're The Chosen Ones.

This week…

Whenever I say I’m “reading” something long (usually a wordy, dry, serious PDF document or book), more often than not I really mean that I’m “scanning through” it. I don’t know why I feel like I have to clarify that, but it’s no secret that I’m a slow but sly reader: I keyword my way through with Ctrl-F. No one’s perfect, right?

Here’s a selection of top stories on my radar (all of which I read in full), a few personal recommendations, and the chart of the week.

We need to talk about Facebook’s:

PR guy, Andy Stone, by Chris Stokel-Walker for Input:

He appears to love bringing the fight to reporters and whistleblowers who dare criticize the company’s actions. A former political communications staffer, he’s been with Facebook since 2014, and has been directly rebutting claims from reporters — to their increasing anger — for at least a year.

Insiders-turned-critics, by Ina Fried for Axios:

Frances Haugen, the latest Facebook whistleblower, has been everywhere this week, from 60 Minutes to the Wall Street Journal to a Senate hearing. Haugen is only the latest critical Facebook alum in a long list that includes early employees, the former heads of WhatsApp as well as plenty of rank and file employees disturbed by the products the company has built and their impact on society.

Own internal documents that offer a blueprint for making social media safer for teens, by Jean Twenge for The Conversation:

The details in the 209 pages are revealing. They suggest not only that Facebook knew how Instagram could be harmful, but that the company also was aware of possible solutions to mitigate those harms. Facebook’s own research strongly suggests that social media should be subject to more stringent regulation and include more guardrails to protect the mental health of its users. There are two primary ways the company can do this: enforcing time limits and increasing the minimum age of users.

Algorithms, and how to regulate them ($), according to Roddy Lindsay, a former Facebook data scientist who designed algorithms there, for NYT:

The solution is straightforward: Companies that deploy personalized algorithmic amplification should be liable for the content these algorithms promote. This can be done through a narrow change to Section 230, the 1996 law that lets social media companies host user-generated content without fear of lawsuits for libelous speech and illegal content posted by those users.

As Ms. Haugen testified, “If we reformed 230 to make Facebook responsible for the consequences of their intentional ranking decisions, I think they would get rid of engagement-based ranking.” As a former Facebook data scientist and current executive at a technology company, I agree with her assessment. There is no AI system that could identify every possible instance of illegal content. Faced with potential liability for every amplified post, these companies would most likely be forced to scrap algorithmic feeds altogether.

Social media companies can be successful and profitable under such a regime. Twitter adopted an algorithmic feed only in 2015. Facebook grew significantly in its first two years, when it hosted user profiles without a personalized News Feed. Both platforms already offer nonalgorithmic, chronological versions of their content feeds.

This solution would also address concerns over political bias and free speech. Social media feeds would be free of the unavoidable biases that A.I.-based systems often introduce. Any algorithmic ranking of user-generated content could be limited to nonpersonalized features like “most popular” lists or simply be customized for particular geographies or languages. Fringe content would again be banished to the fringe, leading to fewer user complaints and putting less pressure on platforms to call balls and strikes on the speech of their users.

This tech millionaire went from COVID trial funder to misinformation superspreader

“After boosting unproven COVID drugs and campaigning against vaccines, Steve Kirsch was abandoned by his team of scientific advisers—and left out of a job,” writes Cat Ferguson for MIT Tech Review:

With little government funding available for such work, Kirsch founded the COVID-19 Early Treatment Fund (CETF), putting in $1 million of his own money and bringing in donations from Silicon Valley luminaries: the CETF website lists the foundations of Marc Benioff and Elon Musk as donors. Over the last 18 months, the fund has granted at least $4.5 million to researchers testing the COVID-fighting powers of drugs that are already FDA-approved for other diseases.

That work has yielded one promising candidate, the antidepressant fluvoxamine; other CETF-funded efforts have been less successful. But that’s not a surprise, according to researchers who conducted them: the vast majority of trials for any drug end in failure.

What has alarmed many of the scientists associated with CETF, though, are Kirsch’s reactions to the work he’s funded—both successes and failures. He’s refused to accept the results of a hydroxychloroquine trial that showed the drug had no value in treating COVID, for instance, instead blaming investigators for poor study design and statistical errors.

He’s also publicly railed against what he claims is a campaign against drugs like fluvoxamine and ivermectin. And, according to three members of CETF’s scientific advisory board, he put pressure on them to promote fluvoxamine for clinical use without conclusive data that it worked for COVID.

Steve Kirsch’s alma mater is MIT.

How a small-town B.C. council meeting became a source of COVID-19 disinformation worldwide

Andrew Kurjata for CBC News:

The presentation in question was made by a woman who, in local small business listings, identifies herself as the owner of an “alternative clinic” that uses “energy healing” and “psychic readings,” along with herbal teas and essential oils, to help clients.

While presenting to council, she said she was a “molecular biologist,” without specifying her credentials. She falsely called the vaccines a “genetic experiment” with a high fatality rate, when the reality is they are the result of years of research and have been safely distributed to millions of people worldwide after passing multiple clinical trials.

At the end of the presentation, the mayor thanked the woman for her time, and she thanked him for hearing her out. And that, thought Bumstead, was the end of it. Council later voted to follow public health guidelines and adjourned.

Afterwards, city staff did what they do with every meeting: they uploaded it to the city’s YouTube page, where the woman’s presentation took on a life of its own.

“Interesting if true”: A factor that helps explain why people share misinformation

Mark Coddington and Seth Lewis, writing for Nieman Lab, look at a study by Sacha Altay et al. published in Digital Journalism:

In the end, the study sought to capture what motivates individuals to share news, all things being equal. And although people were more willing to share information they believed to be accurate, they were also clearly willing to share stories that were interesting-if-true. So, even though fake news was recognized by participants as being less accurate than true news (and therefore to some degree less relevant and shareworthy), the interesting-if-true factor complicated the calculation around sharing. It explained why “people did not intend to share fake news much less than true news.”

What I read, watch and listen to…

I’m reading Faisal Devji’s essay “What is the West?” on Aeon.

I’m watching ABC’s Question Everything, the Australian “comedy news panel” co-hosted by Wil Anderson and Jan Fran, featuring comedians who tackle misinformation in the media. It also includes a segment called Jansplain, which is like mansplaining but by Jan, who is not a man but a journalist.

I’m listening to CBC’s The Flamethrower, a miniseries on “how right-wing radio took over American democracy”.

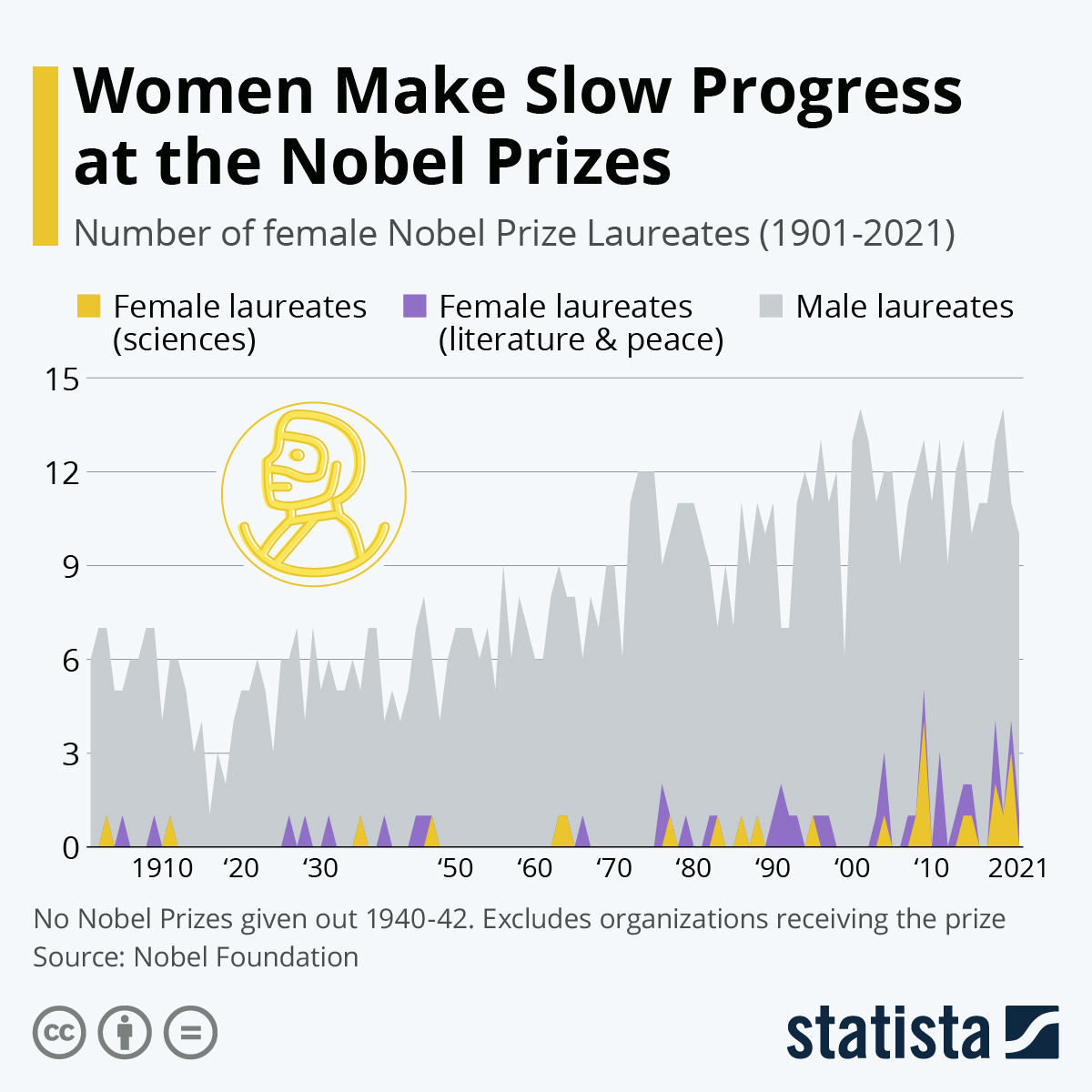

Chart of the week

Katharina Buchholz for Statista: