The 27th Block: 'Bye, Don

"The biggest problem with data is pure arrogance" – Mona Chalabi, data journalist.

‘Bye, Don.

The 2020 US election is really making up for the lack of spectator sports this year. It’s like calling the baseball championship the World Series when only American teams are playing – except this time the world is actually invested. Don’t get me wrong, I love baseball (let’s go, Blue Jays!), and I love how heavily stats-driven the sport is.

That leads nicely into this week’s theme of data, statistics, and polls. People around me expressed shock and disappointment at how close the presidential race had been, “despite the polls showing otherwise.” Wishful desires for a ‘blue wave’ or ‘political tsunami’ in the lower house? I live inside a progressive Malaysian bubble but I come from a conservative background that I’m still very much in touch with. I’m immune to hypnotic, comforting, but false hopes.

There were also a couple of things that circulated on social media and the news that irked me, including reporting that ‘almost half of the US’ voted for Donald Trump. That’s not accurate; almost half of the 150 million US voters voted for Trump – that’s roughly a fifth of the total US population of 330 million. Also, although unrelated to numbers, Van Jones cried crocodile tears. Here are some receipts. Senior producer at AJ+, Sana Saeed, put it succinctly in a tweet: “They’re all gonna rewrite their support and enablement.” Now back to the lies, damned lies, and statistics!

Public election polls are 95% confident but only 60% accurate, UC Berkeley study finds (pre-print)

Aditya Kotak and Don Moore:

Our findings highlight the need for those communicating the results of election polls to adjust downward the level of confidence they claim, or to adjust upward the size of the reported confidence interval. If they wish to report well-calibrated confidence intervals, pollsters or reporters ought to report substantially wider confidence intervals. Current practice, which reports confidence intervals assuming that the only source of error is sampling error, overestimates the accuracy of polls.

The authors also said that overconfidence is not unique to political polls. “One of the most persistent biases in judgment is the excessive faith [that] we know the truth, also known as overprecision in judgment.”
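To get a feel for what “95% confident but only 60% accurate” implies, here is a rough back-of-the-envelope sketch in Python. It is not the study’s method. It uses the textbook margin of error for a sampled proportion, and it assumes (my assumption, not the authors’) that total polling error is normally distributed and simply some multiple of the sampling error. The function names and the 1,000-person sample are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: mean 0, standard deviation 1


def sampling_margin(p, n, confidence=0.95):
    """Textbook margin of error for a proportion p from a simple random sample of n."""
    z = STD_NORMAL.inv_cdf(0.5 + confidence / 2)
    return z * sqrt(p * (1 - p) / n)


def actual_coverage(error_ratio, confidence=0.95):
    """Share of nominal intervals that contain the truth when the real error
    is `error_ratio` times the sampling error (assumes normal errors)."""
    z = STD_NORMAL.inv_cdf(0.5 + confidence / 2)
    return 2 * STD_NORMAL.cdf(z / error_ratio) - 1


def widening_factor(observed_coverage, confidence=0.95):
    """How much wider the interval would need to be to really hit `confidence`,
    given that it only hits `observed_coverage` today (same assumption)."""
    z_nominal = STD_NORMAL.inv_cdf(0.5 + confidence / 2)
    z_observed = STD_NORMAL.inv_cdf(0.5 + observed_coverage / 2)
    return z_nominal / z_observed


if __name__ == "__main__":
    margin = sampling_margin(p=0.5, n=1000)  # hypothetical poll of 1,000 people
    factor = widening_factor(observed_coverage=0.60)
    print(f"Reported margin of error: +/-{margin * 100:.1f} points")
    print(f"Widening needed under this assumption: x{factor:.2f}")
    print(f"Adjusted margin of error: +/-{margin * factor * 100:.1f} points")
```

Under these simplified assumptions, a poll reported at about plus or minus 3 points would need a margin more than twice as wide to deserve its 95% label. Treat that as an illustration of the direction and rough scale of the problem, not as a figure from the paper.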

Stop refreshing that forecast

Sociologist Zeynep Tufekci, who, if you remember, keeps getting the big things right (The 17th Block), wrote the day before the election about how she changed her mind about the value of forecasts because she was “naive and wrong about what would happen once modelling became mainstream.” Here’s her argument, taken in part:

One, the model predictions have just become part of the horse-race coverage. We just spend a lot of time arguing if the models are right.

Two, forecasts themselves are active participants in electoral politics and influence outcomes.

Three, I still don’t think the general public understands what election forecasts are, what they are not, and what they cannot ever be. I’m not sure there’s a way to overcome this problem. Big numbers still dominate our minds and it is cognitively difficult—really difficult—to truly internalise how much uncertainty is in a model.

She also shared an excerpt from her New York Times op-ed ($):

There’s an even more fundamental point to consider about election forecasts and how they differ from weather forecasting. If I read that there is a 20 per cent chance of rain and do not take an umbrella, the odds of rain coming down don’t change. Electoral modeling, by contrast, actively affects the way people behave.

In 2016, for example, a letter from the FBI director James Comey telling Congress he had reopened an investigation into [Hillary] Clinton’s emails shook up the dynamics of the race with just days left in the campaign. Mr. Comey later acknowledged that his assumption that Mrs. Clinton was going to win was a factor in his decision to send the letter.

[…]

Indeed, in one study, researchers found that being exposed to a forecasting prediction “increases certainty about an election’s outcome, confuses many, and decreases turnout.”

Tufekci also spoke about this on WNYC’s On The Media on November 6.

The polling crisis is a catastrophe for American democracy ($)

There was really no rationale for trusting the polls that said that Joe Biden had a comfortable lead, and not just because 2020 has been a weird year. It was a close match right to the end.

David A. Graham for The Atlantic, the day after voting day, when the election result was still far from clear:

Surveys badly missed the results, predicting an easy win for former Vice President Joe Biden, a Democratic pickup in the Senate, and gains for the party in the House. Instead, the presidential election is still too close to call, Republicans seem poised to hold the Senate, and the Democratic edge in the House is likely to shrink.

This is a disaster for the polling industry and for media outlets and analysts that package and interpret the polls for public consumption, such as FiveThirtyEight, The New York Times’ Upshot, and The Economist’s election unit. They now face serious existential questions. But the greatest problem posed by the polling crisis is not in the presidential election, where the snapshots provided by polling are ultimately measured against an actual tally of votes: As the political cliché goes, the only poll that matters is on Election Day.

The problem isn’t that the polls were wrong, it’s that they were useless

Joshua Keating for Slate, also written the day after voting day, before election results were called:

If Joe Biden ultimately wins, pollsters and the data journalists who rely on them will claim some vindication. To be fair, FiveThirtyEight’s final projections gave Biden less than a 1-in-3 chance of a landslide. The popular vote projections are likely to be pretty accurate. Yet, frustratingly, pollsters can also marshal a defence of their methods if Trump manages a surprise win.

[…]

As former FiveThirtyEight writer Mona Chalabi put it, assuming the voice of FiveThirtyEight’s much-derided Fivey Fox mascot, “No matter what happens, I will find a way to say ‘I told you so! That’s how probabilities work!’ ”

It’s not that we should stop trusting polls entirely. They are a flawed but vital tool for campaigns to know where to devote resources, and for campaign journalists to use in reporting. But an entire industry of pundits and soothsayers have turned polling analysis into something more like a religion while proclaiming it a science. Meanwhile, it is increasingly unclear why these projections are useful at all.

Facebook has good reasons for blocking research into political ad targeting

In unrelated news, an update on the NYU research on Facebook’s political ad targeting.

John Naughton for The Guardian:

The researchers had built a plug-in extension for the Google Chrome browser that, they explained, “copies the ads you see on Facebook, so anyone, on any part of the political spectrum, can see them in our public database. If you want, you can enter basic demographic information about yourself in the tool to help improve our understanding of why advertisers targeted you. However, we’ll never ask for information that could identify you. It doesn’t collect your personal information. We take your privacy very seriously.”

So what was the problem? Basically this: the extension has access to not simply public posts on Facebook but also to whatever content the user accessed while logged in. This would include their personal data, of course, but, as is likely in the case on many Facebook pages, also some data from the user’s friends.

The problem, Naughton laid out, is that Facebook was forced to pay a US$5 billion fine and sign up to a consent decree specifying how it protects user data. The company isn’t necessarily trying to protect its users’ data; it’s trying to protect itself. Also:

The unusual thing about the controversy is that it’s one in which both sides have arguable cases. Facebook is trying to comply with a regulation introduced after it made an expensive mistake. The NYU researchers, unlike unscrupulous web-scrapers such as Clearview, are trying to do something useful for democracy.

What I read, watch and listen to…

I’m reading Maria Popova’s Lying in Politics: Hannah Arendt on Deception, Self-Deception, and the Psychology of Defactualisation.

I’m listening to The Guardian’s Can We Trust The Polls? published two weeks before election day. Anushka Asthana and Mona Chalabi discuss how polls are carried out, who participates in these polls, what information polls cannot capture, and how journalists use polling data. If there is one thing you listen to this week, let this be it.

I’m looking at this brilliant shot by AP’s Evan Vucci:

Chart of the week

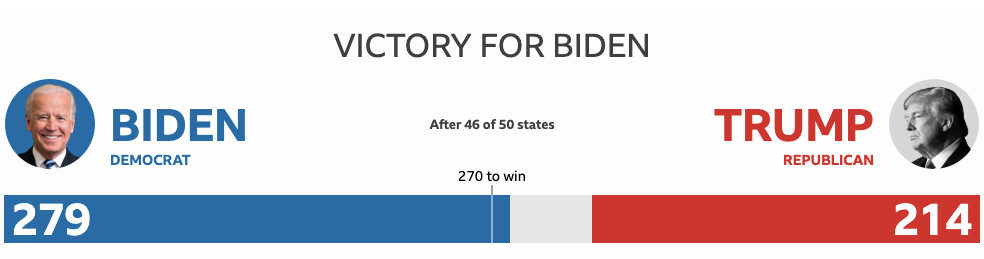

From the BBC, early Sunday Malaysian time, the long-drawn-out 2020 season finale: