The 159th Block: AI chatbot misinformation, and the potential misuse of consumer data

Plus, test your content moderation skills at the end of this issue

This week…

The essay portion is in The Extra Mile because it’s extra long, approximately 16 minutes of reading time. Consider it an expanded version of The 156th Block.

The Extra Mile: Artificial intelligence, academic integrity, and AI literacy

Attribution? Important.

And now, a selection of top stories on my radar, a few personal recommendations, and the chart of the week.

College instructor put on blast for accusing students of using ChatGPT on final assignments

Uwa Ede-Osifo for NBC News:

Texas A&M University–Commerce said it is investigating after a screenshot of an instructor's email — in which he accused students of having used artificial intelligence on their final assignments — went viral on Reddit.

Jared Mumm, an instructor in the agricultural sciences and natural resources department, reportedly told students that they would be receiving an “X” in the course after he used “Chat GTP” (referring to the AI chatbot actually known as ChatGPT) to determine whether they’d used the software to write their final assignments. He said that he tested each paper twice and that the bot claimed to have written every single final assignment.

India’s religious AI chatbots are speaking in the voice of god — and condoning violence

Nadia Nooreyezdan for Rest of World:

Rest of World found that three of the Gita chatbots held strong opinions on India’s prime minister, Narendra Modi, whose Bharatiya Janata Party has close links to right-wing, Hindu nationalist group Rashtriya Swayamsevak Sangh. While the chatbots praised Modi, they criticized his political opponent, Rahul Gandhi. Anant Sharma’s GitaGPT declared Gandhi “not competent enough to lead the country,” while Vikas Sahu’s Little Krishna chatbot said he “could use some more practice in his political strategies.”

Telehealth start-ups are monetizing misinformation – and your data

Rebekah Robinson for Coda Story:

The mere fact that these companies deal with people’s health data might make customers think that it will be covered by HIPAA, the U.S. federal law that requires healthcare and insurance providers to protect sensitive health information from being disclosed without patient consent. But just because you’re sharing your health data does not mean it’s protected. In fact, Hims & Hers’ privacy policy mentions that it is not a “covered entity” under HIPAA. This suggests that the company is collecting demographic data and medical information, as well as images and messages, all on behalf of the diagnosing providers and with no guarantee of privacy protection under U.S. law. We asked Hims & Hers for more information about their business and how they handle customer data but did not receive a response prior to publication.

What happens to your data after it is collected? Researchers have shown that it can be bought and sold by third-party data brokers. Last year, The Markup reported that private information about the medications prescribed through telehealth services (Hims & Hers was among those they tested) had been shared with Big Tech companies like Meta, Google and Snapchat. This data is often used to improve ad-targeting and prompt customers to purchase even more products or services based on their browsing habits. But it could be used or abused in other ways, too.

What I’m reading, listening to, and watching…

I’m reading an interactive piece by Caroline Sinders, with illustrations by Tynesha Foreman and design/code by Matt Daniels in The Pudding, about the challenges of unsubscribing from online services.

I’m listening to “When AI hears a problem,” an episode of MIT Technology Review’s podcast, In Machines We Trust, reported by Hilke Schellmann.

I’m watching D’Angelo Wallace’s assessment of the New York Times’ article on Theranos’ Elizabeth Holmes, which he calls “such an affront to journalism” for its attempt to rehabilitate the reputation of white-collar criminals.

Reviews, opinion pieces, and other stray links:

What’s a Luddite? An expert on technology and society explains by Andrew Maynard for The Conversation.

The New York Times launches “enhanced bylines,” with more information about how journalists did the reporting by Hanaa’ Tameez for Nieman Lab.

What if artificial intelligence isn’t the apocalypse? “It’s like saying the bogeyman is coming” ($) by Jordi Pérez Colomé for El País (in Spanish).

AI-generated images and the risk of rewriting history by William Audureau for Le Monde (in French).

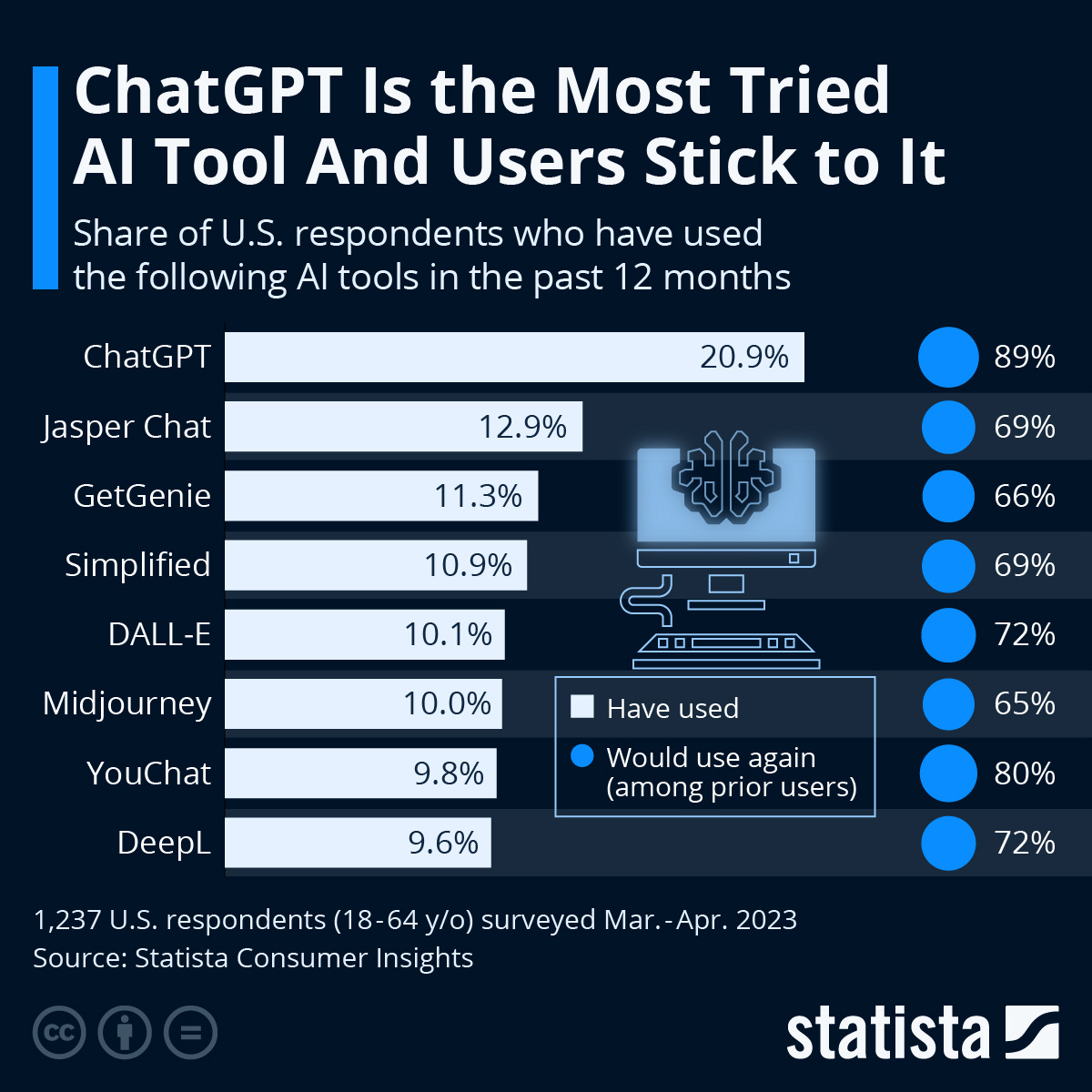

Chart of the week

According to a survey conducted by Statista Consumer Insights, 20 per cent of 1,237 US respondents between 18 and 64 years old had tried ChatGPT by the time the survey was fielded in March and April 2023. Felix Richter reports for Statista:

And one more thing

Play “Moderator Mayhem,” a browser-based mobile game where you can test how well you handle content moderation based on the policies of a fictional company. The game is developed by Mike Masnick, Randy Lubin, and Leigh Beadon and built by Copia Gaming and Leveraged Play.